IID Cloud

A GPU-accelerated platform built on a cloud-native architecture. It provides elastic, scalable compute for deep learning, scientific computing, HPC, and creative design, delivering supercomputing-level performance on demand.

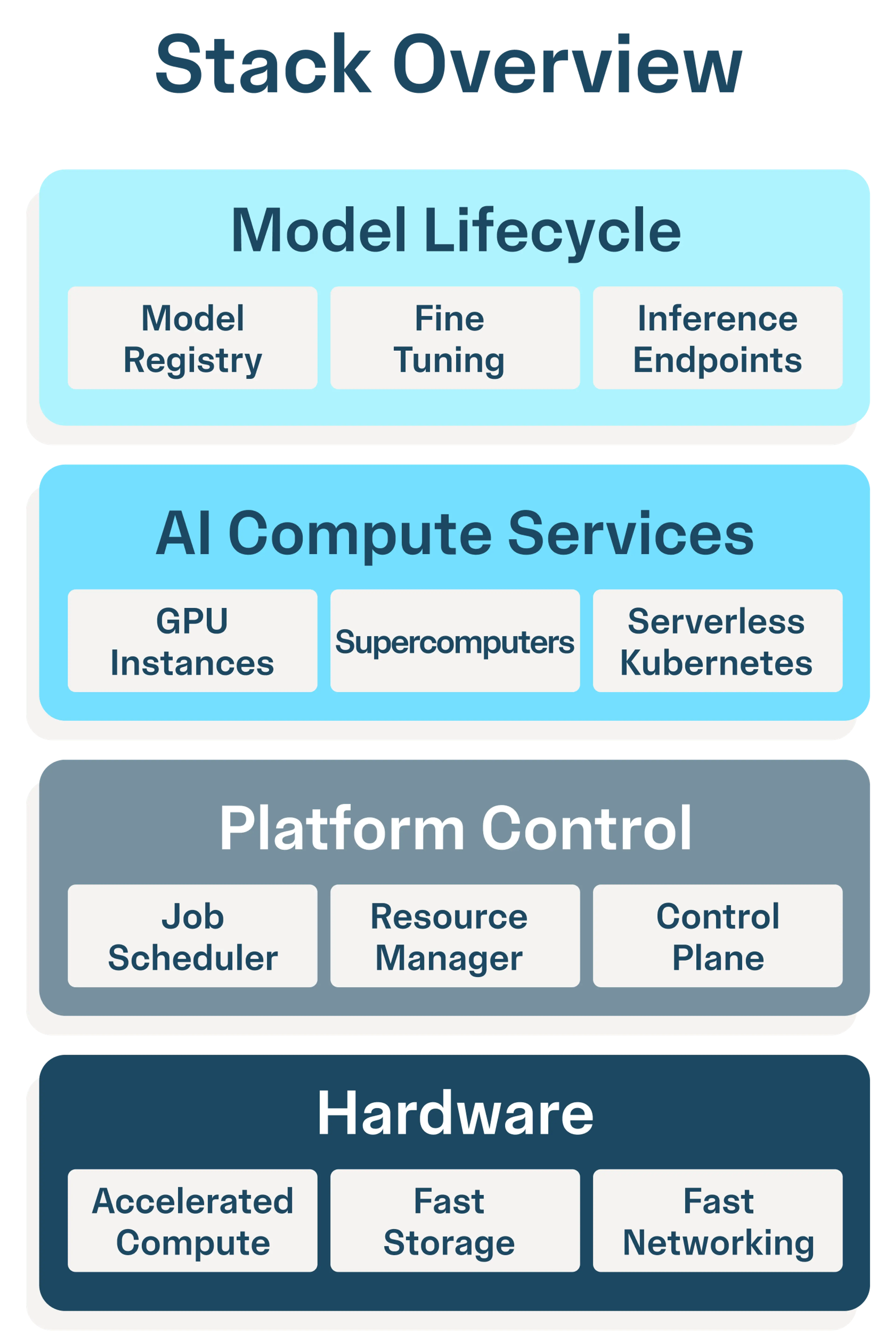

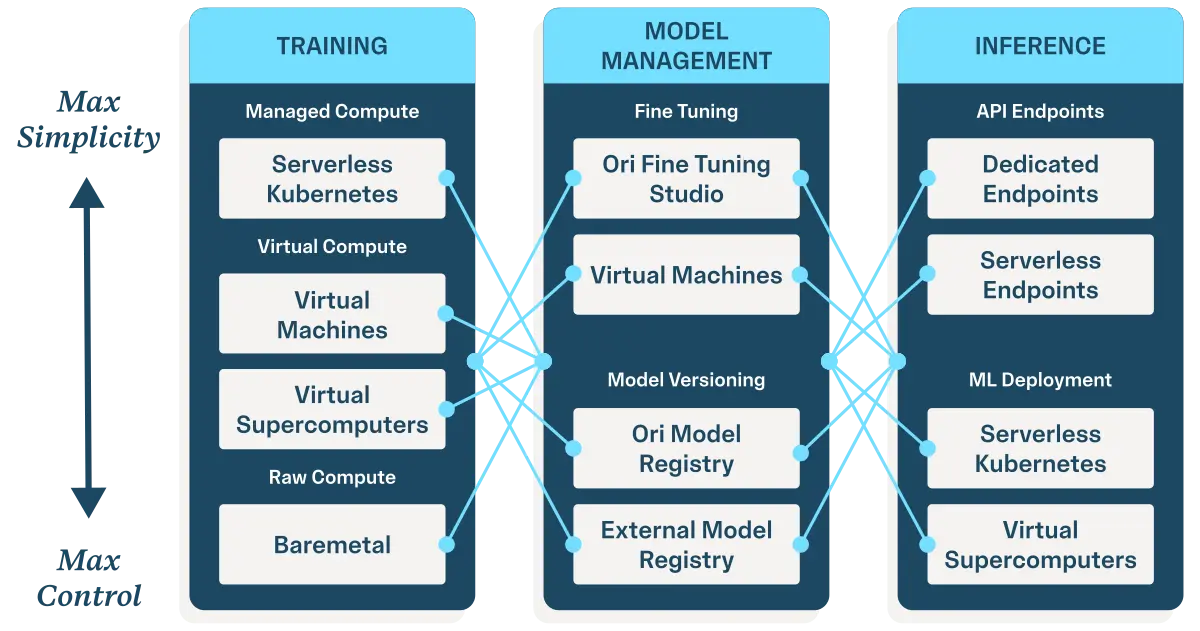

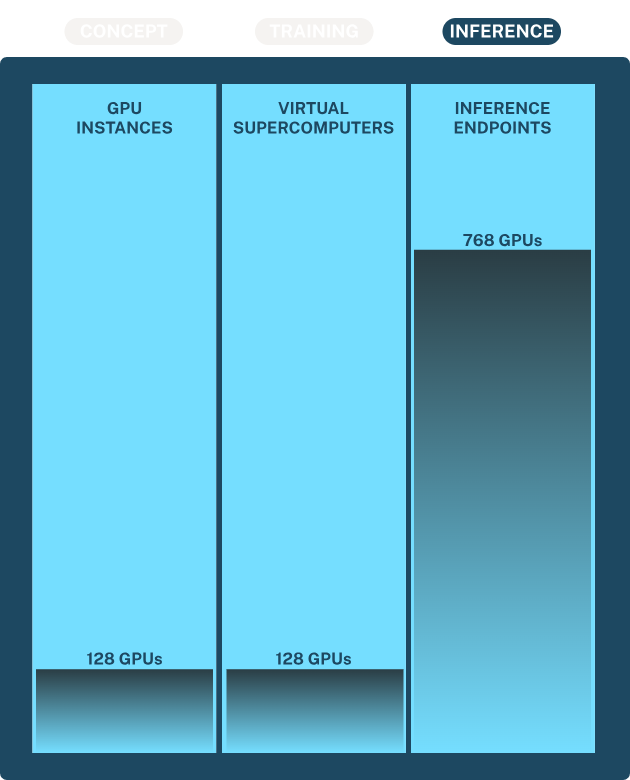

Accelerate every stage of your AI lifecycle, from first experiment to real-world impact.

IID Cloud gives you end-to-end control at scale — across compute, orchestration, and deployment — all from a single, integrated platform.

Go from prototype to production faster with integrated AI/ML tooling.

Build fast without losing control, security, or compliance.

Maximize GPU utilization without compromising tenant isolation or data security.

Deploy on your infrastructure and burst to cloud when needed for scale.

One platform.

Many compute options.

Built for AI.

From bare metal to supercomputers — spin up the right compute instantly.

Tooling for training, tuning, and deployment — unified across the entire stack.

No glue code, no patchwork — just one integrated platform for AI.

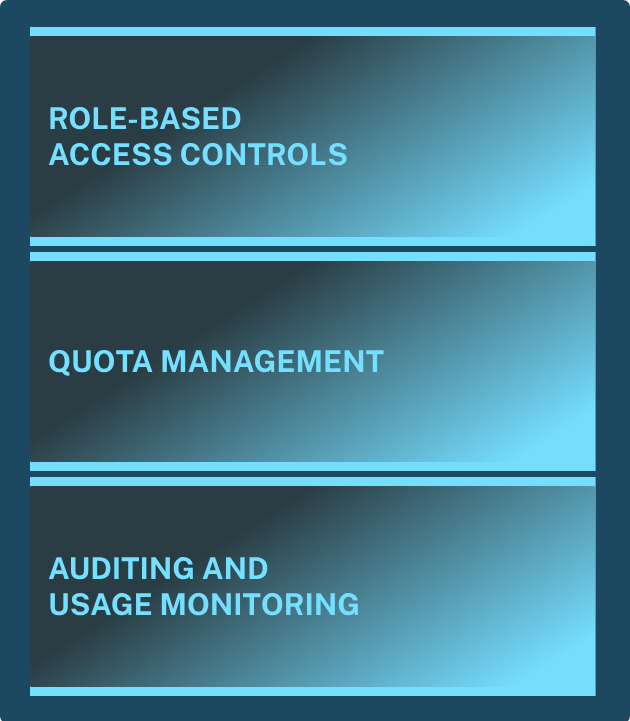

Unlock the full power of AI while maintaining control over compliance, access, and infrastructure.

Support for soft and hard tenancy with GPU/node-level isolation and network segregation.

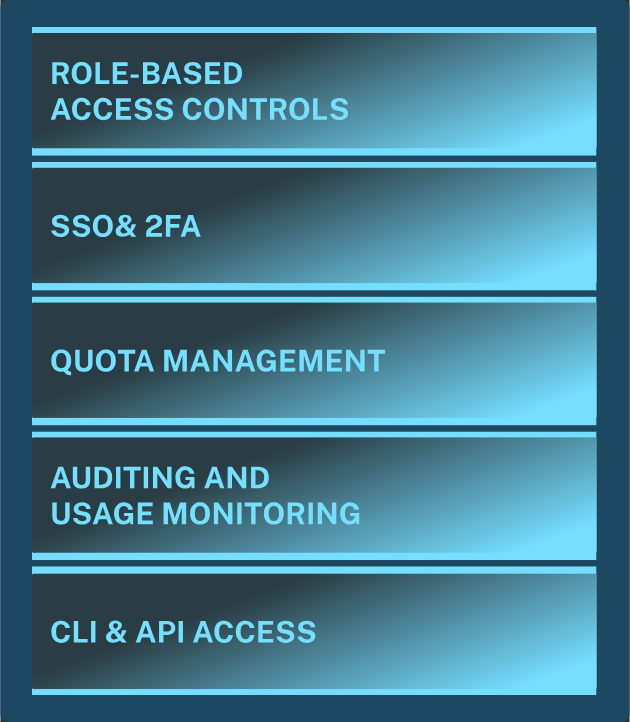

Quota management, usage tracking, exportable audit logs, and CLI/API permission sets.

Enforce SSO, 2FA, and RBAC across teams and projects, with policy-based access for every workload and interface.

SOC 2 Type II, ISO 27001, GDPR, and HIPAA-ready, supporting data residency and audit requirements across regions.

80GB VRAM | 15-core vCPUs | 180GB RAM | 300GB Storage

48GB VRAM | 15-core vCPUs | 90GB RAM | 1.6TB Storage

80GB VRAM | 30-core vCPUs | 380GB RAM | 3.84TB Storage

24GB VRAM | 22-core vCPUs | 90GB RAM | 1.6TB Storage

80GB VRAM | 24-core vCPUs | 240GB RAM | 3.0TB Storage

640GB VRAM | 192-core vCPUs | 1920GB RAM | 3.0TB Storage

141GB VRAM | 24-core vCPUs | 240GB RAM | 3.0TB Storage
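
As a rough illustration of how the configurations listed above might be compared, the sketch below tabulates them and picks the smallest one that satisfies a VRAM and RAM requirement. It is a minimal sketch, not an IID Cloud API: `InstanceConfig`, `CONFIGS`, and `smallest_fit` are hypothetical names introduced here, and because the page does not name the instances, none are invented.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class InstanceConfig:
    """One listed configuration (hypothetical type, for illustration only)."""
    vram_gb: int
    vcpus: int
    ram_gb: int
    storage_tb: float


# Values copied from the list above; 300GB of storage is written as 0.3 TB.
CONFIGS = [
    InstanceConfig(80, 15, 180, 0.3),
    InstanceConfig(48, 15, 90, 1.6),
    InstanceConfig(80, 30, 380, 3.84),
    InstanceConfig(24, 22, 90, 1.6),
    InstanceConfig(80, 24, 240, 3.0),
    InstanceConfig(640, 192, 1920, 3.0),
    InstanceConfig(141, 24, 240, 3.0),
]


def smallest_fit(min_vram_gb: int, min_ram_gb: int = 0) -> Optional[InstanceConfig]:
    """Return the listed configuration with the least VRAM (then RAM, then vCPUs)
    that still meets both minimums, or None if nothing listed is large enough."""
    candidates = [
        c for c in CONFIGS
        if c.vram_gb >= min_vram_gb and c.ram_gb >= min_ram_gb
    ]
    return min(candidates, key=lambda c: (c.vram_gb, c.ram_gb, c.vcpus), default=None)
```

For example, `smallest_fit(100)` returns the 141GB VRAM configuration, while `smallest_fit(60, min_ram_gb=200)` skips the 180GB RAM option and returns the 80GB VRAM, 24 vCPU, 240GB RAM one. Ranking by VRAM first is an assumption about what matters most for a workload; price is not listed on this page, so it is not part of the ordering.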

We are a cloud service provider specializing in high-performance GPU computing, dedicated to delivering stable, efficient, and elastic compute for AI research, deep learning, and scientific computing. With a cloud-native architecture and globally distributed data centers, we meet computing needs at every scale, from individual developers to large enterprises.

Leveraging the latest GPU technology and cloud-native architecture to ensure high performance and reliability.

Data centers located across multiple global regions deliver low latency and high availability.

A team of senior engineers and technical experts providing 24/7 technical support.